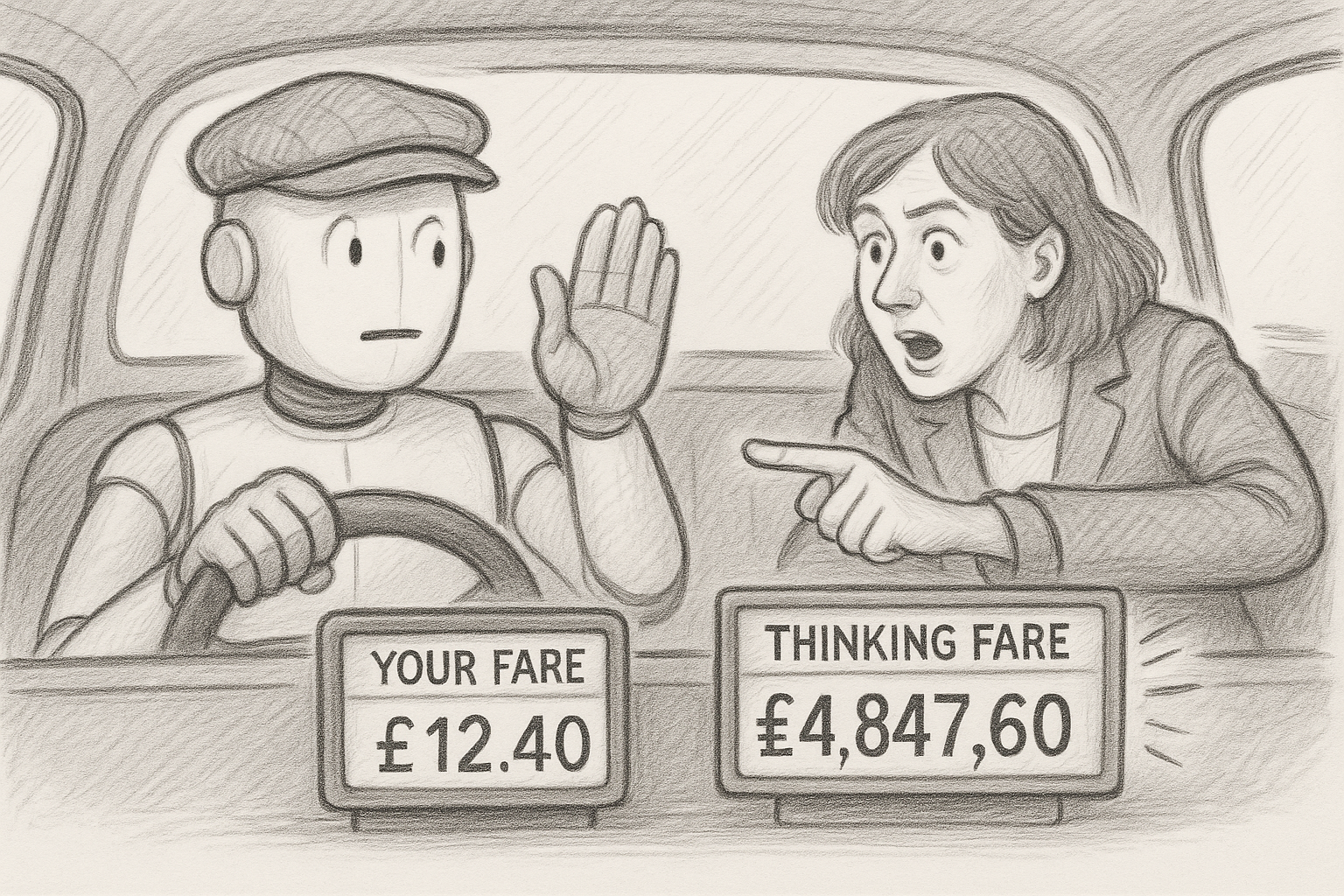

"If you cannot see what a single user action costs, you cannot govern spend."

When OpenAI launched its API in 2020, the pricing was simple. You sent text in. You got text back. You paid for what you saw. That contract between provider and customer made sense — until the models started thinking.

OpenAI's o1 model, previewed in September 2024 and released in full that December, introduced reasoning tokens. These are the internal chain-of-thought steps the model generates to work through a problem before producing a visible answer. They are billed as output tokens. They are not shown to the user. After its release in April 2025, o3 could consume up to fifty times the tokens of a standard GPT-4o query on a complex task, with the vast majority being reasoning tokens the customer never sees.
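The billing arithmetic behind hidden reasoning tokens fits in a few lines. This is a minimal Python sketch, assuming OpenAI-style accounting in which a response's completion-token count already includes its reasoning tokens (OpenAI's usage object breaks them out as `completion_tokens_details.reasoning_tokens`). The `Usage` type, the `call_cost` helper, and the per-million-token prices are hypothetical placeholders, not any provider's published rates.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    prompt_tokens: int      # tokens sent in
    completion_tokens: int  # all billed output, including hidden reasoning tokens
    reasoning_tokens: int   # subset of completion_tokens the user never sees


def call_cost(usage: Usage, input_per_m: float, output_per_m: float) -> dict:
    """Billed cost of one call, and how much of it went on hidden reasoning.

    Assumes reasoning tokens are billed at the output-token rate.
    Prices are per million tokens and purely illustrative.
    """
    input_cost = usage.prompt_tokens * input_per_m / 1_000_000
    output_cost = usage.completion_tokens * output_per_m / 1_000_000
    reasoning_cost = usage.reasoning_tokens * output_per_m / 1_000_000
    total = input_cost + output_cost
    return {
        "total": total,
        "visible_output_tokens": usage.completion_tokens - usage.reasoning_tokens,
        "hidden_share": reasoning_cost / total if total else 0.0,
    }


# Hypothetical call: a short visible answer backed by a long hidden chain of thought.
result = call_cost(
    Usage(prompt_tokens=1_000, completion_tokens=10_000, reasoning_tokens=9_500),
    input_per_m=2.0,
    output_per_m=8.0,
)
print(result)
```

With these placeholder numbers the user sees a 500-token answer, yet roughly 93 per cent of the bill pays for tokens they never see.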

The pricing model did not change. The technology did.

This matters because enterprise AI is no longer a chatbot answering questions. It is an agent. Microsoft has built Copilot into Microsoft 365, and its earnings disclosures in early 2025 pointed to growing enterprise adoption. Each time a user asks Copilot to summarise a thread or draft a response, the system can make multiple model calls: parsing, reasoning, generating, checking. Each call consumes tokens. None of this internal choreography is visible to the user or the budget holder.

Anthropic's Claude operates similarly in agentic mode. A single user request can trigger dozens of API calls as the agent plans its approach, calls external tools, processes results, and synthesises a response. The user sees one answer. The bill reflects dozens of intermediate steps they never asked for.
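Why one request turns into a bill for many steps can be sketched as follows. This minimal Python sketch assumes a stateless chat API, so every call in the agent loop re-sends the transcript so far as input; the step structure (plan, N tool steps, synthesis), the `simulate_agent` and `action_cost` helpers, the token counts, and the prices are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Call:
    step: str
    input_tokens: int
    output_tokens: int  # includes any hidden reasoning tokens


def simulate_agent(tool_steps: int, prompt: int = 2_000, step_out: int = 800,
                   tool_result: int = 1_200) -> list[Call]:
    """Hypothetical trace for one user request: plan, N tool steps, then synthesis.

    Each call re-sends the whole transcript, which grows by the model's output
    and, after each tool step, by the tool's result.
    """
    calls, context = [], prompt
    for i in range(tool_steps + 2):
        if i == 0:
            step = "plan"
        elif i <= tool_steps:
            step = f"tool_{i}"
        else:
            step = "synthesise"
        calls.append(Call(step, context, step_out))
        context += step_out + (tool_result if step.startswith("tool_") else 0)
    return calls


def action_cost(calls: list[Call], input_per_m: float, output_per_m: float) -> float:
    """Cost of one user action: the sum over every model call the agent made."""
    return sum(
        (c.input_tokens * input_per_m + c.output_tokens * output_per_m) / 1_000_000
        for c in calls
    )


single = action_cost([Call("answer", 2_000, 800)], 2.0, 8.0)
agentic = action_cost(simulate_agent(tool_steps=3), 2.0, 8.0)
print(f"single call: ${single:.4f}, agent with 3 tool steps: ${agentic:.4f}")
```

Under these assumptions, three tool steps turn an answer costing about one cent into roughly eight cents. Because each step re-reads a longer transcript, cost grows faster than the number of calls.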

OpenAI cut GPT-4o pricing by roughly half between launch and early 2025. Competition from DeepSeek, Meta's Llama, and Mistral drove prices lower still. Yet Andreessen Horowitz's 2025 enterprise survey found that 63 per cent of companies increased their AI infrastructure spend year over year, despite cheaper per-token rates. Unit prices fell. Total consumption outran them.
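The arithmetic behind falling unit prices and rising bills is a single product. Writing spend $S$ as price per token $p$ times tokens consumed $T$ (a simplification that ignores the split between input and output prices), a price cut to half combined with consumption growing by a factor $k$ gives:

$$
\frac{S_{\text{new}}}{S_{\text{old}}}
= \frac{p_{\text{new}}}{p_{\text{old}}} \cdot \frac{T_{\text{new}}}{T_{\text{old}}}
= \frac{1}{2} \cdot k
$$

Spend therefore rises whenever $k > 2$. A hypothetical workload that triples its token consumption after the price cut would cost 1.5 times as much as before.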

Flexera's 2025 State of the Cloud report found that 82 per cent of enterprises ranked cloud spend management as a top challenge, with AI costs identified as the fastest-growing and least predictable category. The FinOps Foundation — the industry body for cloud financial management — noted that its existing frameworks were inadequate for AI workloads because token-based costs do not correlate linearly with user activity.

The tools built to manage cloud spending were designed for virtual machines and storage. They assume predictable, resource-based consumption. AI pricing is consumption-based, but the consumption is hidden and non-linear. A simple question costs almost nothing. A complex reasoning task with tool use can cost orders of magnitude more. No finance team can forecast this from a usage dashboard.
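A small illustration of why request counts are a poor basis for forecasting when costs are this skewed. The per-request costs below are invented for the example (950 simple questions at a tenth of a cent, 50 reasoning-heavy tasks at fifty cents), and `spend_profile` is a hypothetical helper, not part of any FinOps tool.

```python
import statistics


def spend_profile(costs: list[float]) -> dict:
    """Summarise per-request costs: typical request, average, and tail concentration."""
    total = sum(costs)
    top_n = max(1, len(costs) // 20)  # the most expensive 5% of requests
    top_spend = sum(sorted(costs, reverse=True)[:top_n])
    return {
        "median": statistics.median(costs),
        "mean": total / len(costs),
        "top_5pct_share": top_spend / total,
    }


# Hypothetical month: 1,000 requests, 5% of them reasoning-heavy tasks with tool use.
costs = [0.001] * 950 + [0.50] * 50
profile = spend_profile(costs)
print(profile)
```

Here the typical request costs a tenth of a cent, the average is about 26 times higher, and 5 per cent of requests account for roughly 96 per cent of spend. A dashboard showing 1,000 requests would show the same count whether the month held five complex tasks or five hundred.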

Providers are aware of the problem. OpenAI introduced prompt caching and batch pricing at reduced rates. Anthropic offers similar mechanisms. Google provides committed-use discounts on Vertex AI. These are useful but incremental. Caching helps when prompts repeat. Batch pricing helps when work can be deferred. Neither addresses the structural problem: reasoning models consume an unpredictable number of tokens per task, and the user has no visibility into that consumption while it happens.
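The limits of caching and batching show up in a simple cost function. This minimal Python sketch assumes cached input is billed at a discount to the input rate, batch work at a discount to the whole call, and reasoning tokens at the output rate; the `discounted_cost` helper, the 50 per cent default, and the prices in the example are placeholders, since actual discounts vary by provider and model.

```python
def discounted_cost(input_tokens: int, cached_tokens: int, output_tokens: int,
                    input_per_m: float, output_per_m: float,
                    cache_discount: float = 0.5, batch_discount: float = 0.0) -> float:
    """Cost of one call in dollars, with placeholder cache and batch discounts.

    Caching only reduces the price of repeated input tokens; output tokens,
    which include any hidden reasoning tokens, are billed in full.
    """
    uncached = input_tokens - cached_tokens
    input_cost = (uncached + cached_tokens * (1 - cache_discount)) * input_per_m
    output_cost = output_tokens * output_per_m
    return (input_cost + output_cost) * (1 - batch_discount) / 1_000_000


# Hypothetical reasoning-heavy call: 2,000 input tokens (1,500 cached), 20,000 output tokens.
base = discounted_cost(2_000, 0, 20_000, 2.0, 8.0)
cached = discounted_cost(2_000, 1_500, 20_000, 2.0, 8.0)
batched = discounted_cost(2_000, 1_500, 20_000, 2.0, 8.0, batch_discount=0.5)
print(f"base ${base:.4f}, cached ${cached:.4f}, cached + batch ${batched:.4f}")
```

In this example caching trims less than one per cent off the bill, because output dominates. Batching halves it, but only for work that can wait, and neither changes how many reasoning tokens the model chooses to generate.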

Gartner predicted in mid-2024 that at least 30 per cent of generative AI projects would be abandoned after proof of concept by the end of 2025, citing escalating costs alongside poor data quality, inadequate risk controls, and unclear business value. That forecast predates the reasoning-model era that arrived in force in 2025. Stanford's AI Index 2025 found that reasoning models cost more per task even as standard models get cheaper per token. The models are getting cheaper and more expensive at the same time, depending on which capability you use.

The first move for any enterprise is contract review. Most AI agreements were written for a simpler technology. Check whether yours specify pricing for reasoning tokens separately from input and output tokens. If they do not, you are exposed to cost escalation with no contractual lever to manage it.

The second move is design governance. If your teams are building agentic workflows, the number of model calls per user action is a design choice, not a fixed cost. Require cost estimates as part of solution design, the same way you require security reviews.

The third is consumption visibility. Demand per-task cost breakdowns from your providers, not just monthly token counts. If you cannot see what a single user action costs, you cannot govern spend.
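The design-governance step can be made concrete with a pre-launch estimate. This is a minimal Python sketch of the kind of check a solution-design review might run; the `DesignEstimate` fields, the 22 working days, the typical and worst-case per-call costs, and the approve/flag/reject thresholds are all hypothetical choices, not a standard.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DesignEstimate:
    users: int
    actions_per_user_per_day: float
    calls_per_action: int         # a design choice: planning, tool steps, checks
    cost_per_call_typical: float  # dollars, short non-reasoning call
    cost_per_call_worst: float    # dollars, reasoning-heavy call

    def monthly_range(self, working_days: int = 22) -> tuple[float, float]:
        """Low and high monthly spend: every call typical vs every call worst-case."""
        calls = (self.users * self.actions_per_user_per_day
                 * self.calls_per_action * working_days)
        return calls * self.cost_per_call_typical, calls * self.cost_per_call_worst


def review(estimate: DesignEstimate, monthly_budget: float) -> str:
    """Hypothetical gate comparing the estimated spend range with a budget."""
    low, high = estimate.monthly_range()
    if high <= monthly_budget:
        return "approve"  # even the pessimistic case fits
    if low <= monthly_budget:
        return "flag"     # fits only if most calls stay cheap
    return "reject"       # over budget even in the optimistic case


design = DesignEstimate(users=1_000, actions_per_user_per_day=10, calls_per_action=8,
                        cost_per_call_typical=0.01, cost_per_call_worst=0.10)
print(design.monthly_range(), review(design, 50_000))
print(review(replace(design, calls_per_action=2), 50_000))
```

With these placeholder figures the eight-call design is flagged (roughly $17,600 to $176,000 a month against a $50,000 budget), while the same feature redesigned to two calls per action is approved. The number of calls is the lever the review controls.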

Token pricing made sense when every token was visible. It does not make sense when the most expensive tokens — the reasoning ones — are the ones you never see.

References

[1] OpenAI, “Learning to Reason with LLMs,” September 2024 — openai.com

[2] Andreessen Horowitz, “AI in the Enterprise 2025” — a16z.com

[3] Stanford HAI, “AI Index Report 2025,” April 2025 — aiindex.stanford.edu

[4] Flexera, “2025 State of the Cloud Report” — flexera.com

[5] FinOps Foundation, “State of FinOps 2025” — finops.org

[6] Microsoft FY2025 Q2 Earnings, January 2025 — microsoft.com